Interview Series: In Conversation with BiblioTech Hackathon Participants

The following interview was conducted by Sam Goven, a master’s student in Journalism at KU Leuven, with Roberta Pireddu, team leader of the BiblioTech Hackathon project PostScript. Roberta provides academic support for the Master in Digital Humanities at KU Leuven. Roberta’s team worked with the postcard collection. You can learn more about the team’s work by having a look at their project poster in the BiblioTech Zenodo community and by visiting their project website.

The BiblioTech Hackathon is a 10-day event organized by KU Leuven Libraries and the Faculty of Arts. Students, researchers, and staff members of KU Leuven worked in multidisciplinary teams with digitized collections from KU Leuven Libraries. The theme of the 2026 edition was travel, which was reflected in the selected datasets: historical postcards and historical travelogues. More information about the hackathon and its results can be found on the BiblioTech 2026 website.

Congratulations on winning both the first prize and the public’s favorite! Can you tell me a bit about what first drew you to the hackathon, and have you participated in one before?

I hadn’t participated in a hackathon before, but I had organized a small one myself. It was for a project on Artificial Intelligence and its application in the cultural heritage sector. I knew a lot about the organizational aspects, but not much about how to actually participate in a hackathon. What I mainly did then was observe the other groups: what they were doing and how they came up with their projects. So I was mostly involved from the sidelines.

As for why I participated: I’m currently praktijkassistent and teaching assistant for the Master in Digital Humanities, and digital humanities students are an important target group for the BiblioTech hackathon. Taking part myself allowed me to work on a project together with the students. I also already knew the postcard collection, as I had worked with it in the past, and I thought it would be nice to create something new using that material.

And your own background is in Digital Humanities as well?

Yes, that’s right. I studied Digital Humanities in Leuven, and before that I studied history, more specifically medieval history, so my background is very much in the humanities. I’ve mainly worked with heritage collections, like the ones that were used for this hackathon.

I already mentioned you won the first prize with your project. Could you describe it in a nutshell?

Our team worked with the postcard collection, which is a very large one, and visually very attractive. It’s rich in information, with a lot of detail in the metadata, but because of its size it can be quite difficult to really explore all of those details.

What we wanted to create was a kind of website or digital space where people could explore the collection more easily and from different perspectives. We chose three main perspectives. One of them, for example, is a map, where users can see the locations represented in the collection and then zoom in on the details. On the website, users can also explore specific elements, like all the trains in the collection, all the cars, parks, and so on.

In addition, we created a crowdsourcing section. We wanted to include user participation so that the collection could be enriched with additional information. For example, on the back of the postcards there are greetings, and we wanted to allow users to transcribe or translate those messages so they could be added to the metadata.

You were the team leader of your group. Was this role in line with what you had expected?

I expected that I would need to give structure to the team: define the focus of the project, set concrete steps, and remind everyone of deadlines. In the end, though, everything developed very organically and smoothly, and I was really happy with how it worked out.

At the ‘Meet the Data, Meet the People’ event, you were introduced to the data for the first time. How did the brainstorming process go?

At first, it wasn’t very clear what specific skills everyone could bring to the project, or how we should approach such a large collection. That led to a lot of questions: what do we actually want to do with this collection, and what do we want to highlight?

In the beginning, we had many different ideas. We thought about working with the colors of the postcards, or focusing on locations, and that’s when the idea of using a map came up. There were a lot of possibilities. At a certain point, though, we decided that we really needed to look more closely at the dataset, see what was actually there, and then make a decision. That happened a couple of days after the opening event. We had some time to reflect, explore the data, and then settle on a clear approach.

Was it difficult to decide in which direction you wanted to go?

A bit, yes. But in the end, the direction really emerged from what we actually found in the data. As I mentioned before, we initially wanted to work with color, but when we started thinking about the kind of results that would produce, we realized it wasn’t the direction that appealed to us the most. So at some point we had to make a clear decision: okay, let’s go in this direction and really commit to it.

That said, it was still a bit challenging, because along the way new ideas kept popping up. For example, we considered adding a gamification aspect to the crowdsourcing section, where participants could earn points based on how much they contributed. In the end, we had to leave that out because of time constraints. At some point we realized: there are only three days left, so how can we realistically make this work? It’s important at that stage to be realistic and say, okay, this is something we can do, and this is something we can’t.

During your final presentation at the closing event, you mentioned the educational goal of the project and its collaborative aspect. What kind of audience did you have in mind? Who should be able to use the website you developed?

We definitely had researchers in mind. The idea was to help them shape their research by giving them access to all these additional details in the collection. Because the postcard collection is so broad, it’s not immediately obvious what kind of research questions you could explore with it, and we wanted to make that easier.

At the same time, we wanted to reach a wider audience, people who are curious about Belgium’s history, about tourist places, and what they looked like in the past. Some might be interested in comparing then and now, others in seeing how streets and cities have changed, or just browsing the collection and feeling a bit nostalgic.

One thing I found very appealing was how user‑friendly the website was. It really looked like something anyone could use.

Yes, absolutely. I think a lot of people would love the idea of being able to see how a place looked in the past and compare it to how it looks now, to see how much it has changed or, sometimes, that it no longer exists at all.

The end result was a success, but did you face any roadblocks during the hackathon?

There was one issue at the beginning related to the locations of the postcards. We wanted to create a map and link each image directly to a specific place, but the coordinates were missing in the collection. So we first had to retrieve that information, and that took some time. At one point, we even thought it wouldn’t be possible. In the end, though, one of the team members managed to clean the dataset and recover the exact coordinates for each location, which allowed us to move forward.

You mentioned that this was the first hackathon you participated in. Do you feel you picked up any new skills along the way, and how might you use them in future research?

The crowdsourcing concept was particularly interesting for me. It’s something I had already worked with in earlier projects where we involved the public. For example, we showed people images, often of places in cities, and asked them to share additional information about what they saw.

What was new for me in this project was the specific crowdsourcing tool that we embedded in the website. I think that’s something I’ll definitely use again in the future. It’s very user‑friendly and easy to integrate, and the fact that it automatically produces a file with all the participants’ responses is very useful.

What advice would you give to someone who might be hesitant to participate in a hackathon because of their background?

I really think everyone can participate, because there’s a place for everyone in a hackathon, even if you don’t have strong technical skills. Whatever your background or skills, there’s always a way to contribute and find your role within the group. That might be through creative ideas, working on the poster, or helping shape the concept of the project. There’s always something meaningful you can bring to the team.

Last question: what advice would you give a team leader?

I would say don’t be too strict at the beginning. It’s important to give everyone enough space to be creative and to let people explore ideas, so that everyone’s skills can really emerge. I think the brainstorming phase is especially important, because that’s when you start to understand what each team member can do and how everyone can contribute to the project.

Congratulations one more time! It’s amazing how much each team accomplished in such a short period of time. For me, it almost felt unreal; this looked like a year’s worth of work.

Yes, exactly. For me, this could have been a thesis: these are the kind of results you would expect from a master’s thesis. That’s really what made it so remarkable to me.

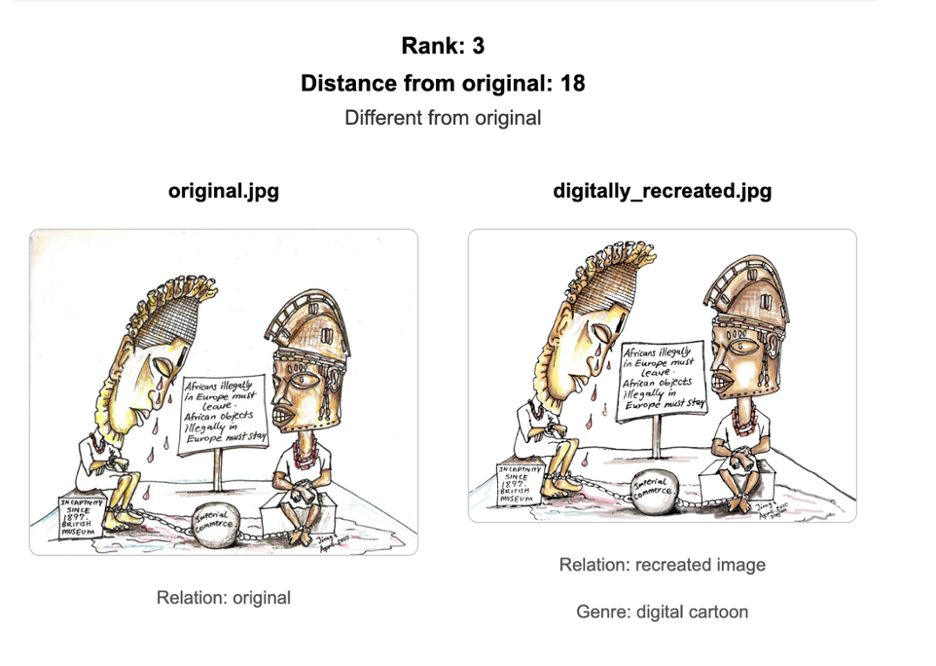

(New version on the left.)
