AI and the humanities: Across the Princeton campus, an era of collaboration is underway

By Allison Gasparini, Center for Statistics and Machine Learning, and AI Lab

Originally published on the Princeton homepage.

Over the last few years, with the launch of the Princeton Laboratory for Artificial Intelligence and the New Jersey AI Hub, Princeton University has firmly established its presence at the forefront of artificial intelligence research, including transformative work in humanities scholarship.

From piecing together fragments of ancient texts with language models to probing the future of human-robot interactions, Princeton scholars aren’t just exploring what AI can do for the humanities. They’re uncovering what the humanities can do for AI.

Already, AI tools are appearing in all facets of our society and culture. “It’s the world that our kids are going to inherit,” said Meredith Martin, professor of English, faculty director of Princeton’s Center for Digital Humanities (CDH) and a grant recipient from the international Schmidt Sciences Humanities and AI Virtual Institute. “We should try our hardest to put the humanities into every aspect of AI development, not only in the input data and the interpretation of the results,” she said.

Princeton humanities scholars had already been using machine learning in their research, largely by partnering with the robust community of humanities research software engineers and digital humanities experts at CDH, established more than a decade ago. Now, with the AI Lab, they are proving to be some of the best-positioned collaborators for the future of humanities and AI.

“Our goal in the AI Lab is to support the transformative impact of AI on research across the Princeton campus, and we’re excited about the many opportunities to do so in the humanities,” said AI Lab Director Tom Griffiths.

“The most valuable and productive relationship between artificial intelligence and humanistic research is collaboration,” said Rachael DeLue, director of the University’s sweeping new Humanities Initiative. Here, a sample of the scholarship now underway.

Insights into ancient texts

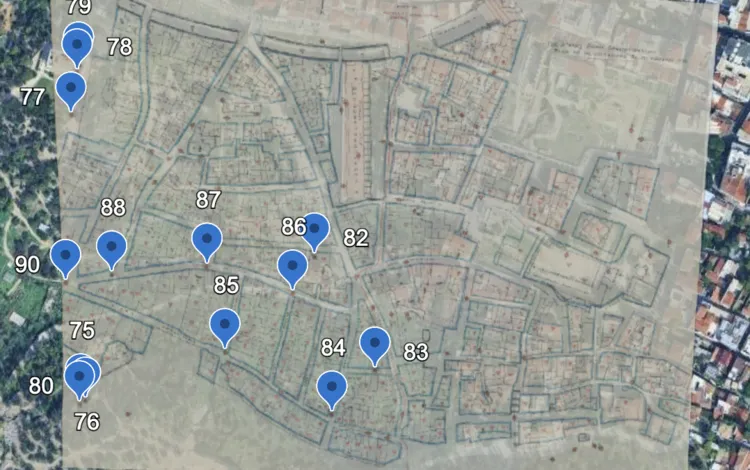

Photo by Matthew Raspanti, Office of Communications

Paul Vierthaler uses machine learning to study premodern Chinese books such as “Xianqing ouji 閒情偶寄 (Leisure Notes),” a collection of essays published in 1671, pictured at right.

Fifteen years ago, Paul Vierthaler became fascinated by a particular figure common in Ming and Qing dynasty literature. Wei Zhongxian, a late Ming dynasty eunuch, nearly took over the imperial government in the 1620s. His infamy became such that within a year of his death, half a dozen novels and unofficial histories on his exploits had already been published. “I became really interested in how people talk about historical events within so-called ‘unreliable genres,’” said Vierthaler.

Studying the historical figure of Wei and the stories he’d inspired raised new questions for Vierthaler: How common were these types of narratives in imperial China? What more could be learned from studying bibliographic information, and how could he even begin to study that information at scale? “I realized, if I wanted to try to get a grip on how these kinds of narratives existed in the Chinese literary tradition, I needed to start thinking more broadly,” he said.

The realization led Vierthaler, now an assistant professor of Chinese literature and interdisciplinary data science, to the global catalog WorldCat, which holds digitized bibliographic records from tens of thousands of libraries around the world. To grapple with the vast amounts of data, Vierthaler turned to computational methods and machine learning, which have altered the scope of his work.

Using machine learning to analyze and extract data from written descriptions of premodern Chinese books — which may include information on author, illustrations, content and more — Vierthaler initially set out to understand whether genres containing suspect stories about historical events increased or decreased in popularity over time. But soon his focus expanded. “It’s exploded into a much larger project when I began to apply these same tools to digitized versions of the books themselves,” he said.

More recently he has been using machine learning methods to study the likely authorship of anonymously published works and to detect historical documents inserted into novels. With the advent of transformer-based language models, Vierthaler is now training specialized language models on premodern Chinese corpora, hoping to pick up minute nuances in the texts he studies. “There’s a movement now in the humanities aimed at training much more targeted, bespoke smaller language models,” Vierthaler said.

Rather than relying on ever-bigger models, Vierthaler’s work points to the value of custom training sets for capturing and retaining the nuance necessary for the study of culture. “Humanities scholars can bring an understanding of the historical background and composition of training materials, which can help identify blind spots that might have otherwise been missed,” he said.

That same movement for highly specialized language models drives the work of Marina Rustow, the Khedouri A. Zilkha Professor of Jewish Civilization in the Near East and professor of Near Eastern studies and history. Rustow is training a model designed to transcribe fragments of medieval texts — and save humans time-consuming, painstaking labor.

Geniza fragment courtesy of Cambridge University Library. Photo: Sameer A. Khan.

Left: A government decree from the Fatimid period in Egypt (969–1171) shows the original Arabic inscription with wide line spacing. Right: Marina Rustow.

Rustow runs the Geniza Lab, a group dedicated to studying an enormous cache of paper and parchment recovered from a medieval synagogue in Cairo. The documents are unique because, unlike most ancient texts preserved today, they’re not the work of society’s elites and philosophers. They’re everyday records from the masses, things like complaints about business travel, heated personal letters and descriptions of stomachaches.

The fragments offer a broad perspective on society in that period, which is invaluable to Rustow as a social historian of the medieval Middle East. But they’re written in dialects of Arabic, Hebrew and Aramaic that no one speaks today and in handwriting that can border on illegible.

Rustow said it can take her two full days to transcribe just one document. She hopes the machine learning model she’s training will turn that around for the 36,000 Geniza fragments that she and her colleagues have uploaded to the Princeton Geniza Project database for public access.

Since the Geniza’s discovery in 1896, “it has taken researchers 130 years to transcribe 7,000 documents, and we have another 29,000 documents to transcribe,” said Rustow. With machine learning, she hopes to save scholars 530 years of transcription drudgery.
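The scale of that estimate can be checked with a simple extrapolation. The sketch below assumes the historical pace (7,000 documents in 130 years) would hold for the remaining 29,000 documents; the function name is illustrative and is not taken from the Geniza Lab.

```python
def years_to_transcribe(years_elapsed: float, docs_done: int, docs_remaining: int) -> float:
    """Extrapolate the remaining effort, assuming the historical pace holds."""
    years_per_doc = years_elapsed / docs_done
    return years_per_doc * docs_remaining


# Figures quoted by Rustow: 130 years, 7,000 transcribed, 29,000 remaining.
estimate = years_to_transcribe(130, 7_000, 29_000)
print(f"{estimate:.0f} years")  # prints "539 years"
```

The result is in the same range as the 530 years cited above; the exact inputs behind that figure are not stated.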

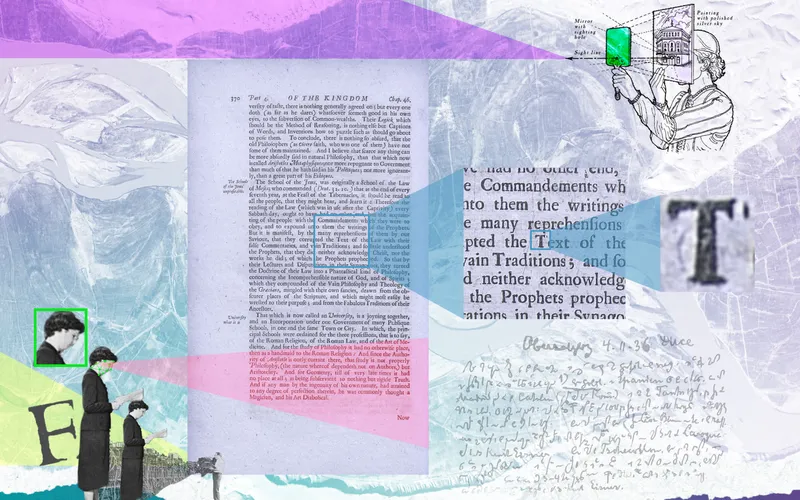

Like Rustow, Barbara Graziosi is on a mission to make premodern texts free and accessible for all. “Ideally, I’d like to see everything that we have from before the invention of printing preserved, made accessible, translated, well edited, well understood and well studied,” said Graziosi, the Ewing Professor of Greek Language and Literature and professor of classics.

Photos by Denise Applewhite, Office of Communications

Barbara Graziosi uses AI as a "collaborator" to study ancient texts, including this 13th-century Byzantine manuscript of Aristotle's "Organon" from Princeton's Special Collections.

Graziosi is contributing to that mission by filling in the gaps of fragmented ancient Greek text. Over millennia, words and phrases written on documents are lost, chewed away by mice, eroded by moisture or obscured by stains. When a student approached her and suggested — before the advent of ChatGPT — that language modeling could generate suggestions to fill the gaps in these papyri, Graziosi began work on a machine learning tool attuned to the nuances of ancient Greek. The result of that work is the Logion Project, which Graziosi leads.

Graziosi said AI works best as a collaborator for humanists, not a replacement for highly trained scholars who dedicate years to studying these difficult texts. The tool Graziosi developed provides several suggested words to fill a given gap in the text. Seeing multiple suggestions can jog the thinking of a scholar who might face a block after spending hours reading and rereading the same passage.
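The idea of offering several ranked candidates for a gap can be illustrated with a toy example. The sketch below is not the Logion Project's model: it swaps in simple frequency counting over a tiny, hypothetical English corpus as a stand-in for a trained language model, and the function name `suggest_fillers` is an assumption made for illustration.

```python
from collections import Counter
from typing import List


def suggest_fillers(corpus: List[str], left: str, right: str, k: int = 3) -> List[str]:
    """Rank words seen between `left` and `right` in the corpus, most frequent first."""
    counts = Counter()
    for sentence in corpus:
        words = sentence.split()
        # Slide a three-word window and count the middle word when its neighbors match.
        for prev, mid, nxt in zip(words, words[1:], words[2:]):
            if prev == left and nxt == right:
                counts[mid] += 1
    return [word for word, _ in counts.most_common(k)]


# Hypothetical toy corpus (an English stand-in for ancient Greek text).
corpus = [
    "the wise man speaks",
    "the old man speaks",
    "the wise man listens",
    "the wise woman speaks",
]
suggestions = suggest_fillers(corpus, left="the", right="man")
print(suggestions)  # prints ['wise', 'old']
```

Returning a short ranked list rather than a single answer mirrors the point above: the scholar, not the tool, makes the final judgment.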

The humanities, as Graziosi sees it, can help shape a future where the strengths of humans and the strengths of machines work in harmonious collaboration. “It’s very important that we keep the conversation going and that we respect human expertise as well as machine confidence,” she said. “I hope more humanists will get involved with AI because their perspective is exactly what’s needed now.”

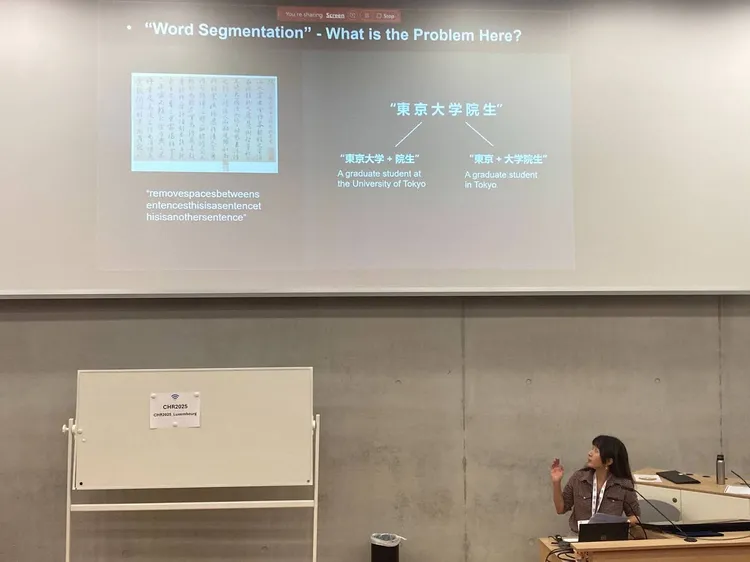

Modern-language applications

When Happy Buzaaba moved to Japan in 2015 to study for his Ph.D. at the University of Tsukuba, he couldn’t speak any Japanese.

“I had to rely on translation apps for my everyday life,” said Buzaaba, who is now an associate research scholar at Princeton Language and Intelligence with affiliations to the Center for Digital Humanities, the African Humanities Colloquium, the Princeton Institute for International and Regional Studies (PIIRS), and the Africa World Initiative.

Japanese-to-English translation is widely available, as both are well-studied languages with vast digital footprints. But some languages — in particular, many of those spoken in Africa — have a scant internet presence on which to train AI models. “I started thinking, imagine you went to a country where they speak a language that you don’t understand, and it’s also not supported by any existing technology,” said Buzaaba.

With computational linguist Christiane Fellbaum, Buzaaba is now introducing African languages to LLMs by creating large collections of syntactically annotated text, called treebanks, for 11 African languages.

The idea is that the rich annotations, filled with linguistic knowledge, can be used to train LLMs on the African languages, even though there’s not as much text as what’s available for Japanese or English. “We can actually create models that perform well on these languages, even with less amount of data,” Buzaaba said.

He and his colleagues have already released three African language models, which benchmarks show to be the best-performing models of their kind. “The main goal here is not just creating tools, but accessibility,” he said. He has also brought this work into the classroom, on campus and in a PIIRS Global Seminar in Kenya.

AI in arts and architecture

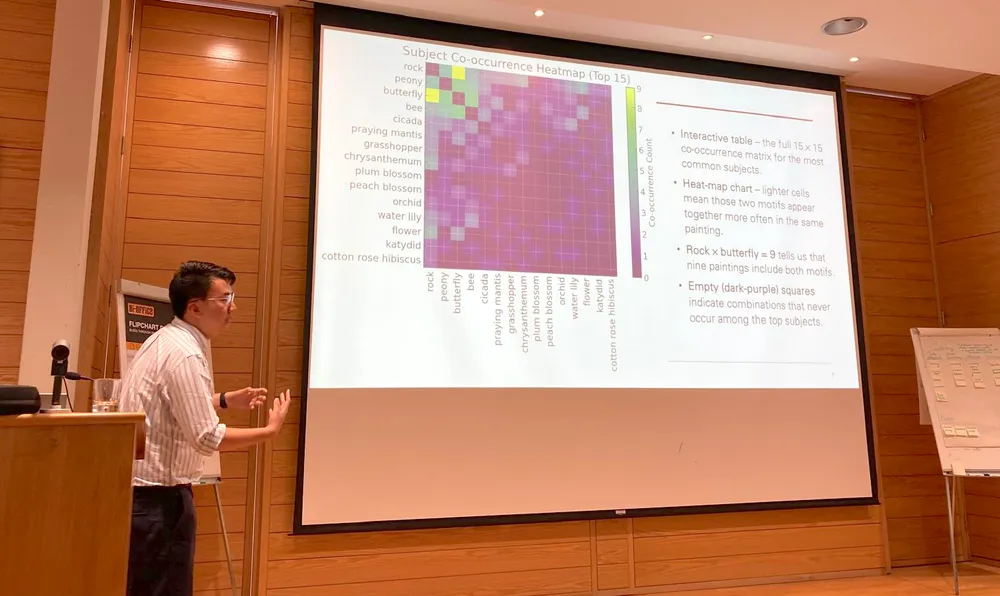

Photo by Matthew Raspanti, Office of Communications

Elizabeth Margulis uses AI to study how people describe their musical experiences in her Music Cognition Lab (pictured at right: Itamar Jalon, a postdoc in psychology and music).

Humanities faculty in music, creative writing and architecture, among other disciplines, are using AI to understand the very essence of human creativity and to inform new work.

Elizabeth Margulis, a professor of music and acting department chair, is trying to understand how music shapes our emotions, imaginings and the thoughts that arise when we let our minds wander. Machine learning, she said, has been instrumental in advancing the studies she conducts in her Music Cognition Lab.

Margulis uses AI to study how people describe their musical experiences. “Where machine learning has been really helpful is giving us a way into unconstrained, free-response descriptions of what music evokes,” she said.

Researchers at the Music Cognition Lab collect these responses from volunteers, who enter a booth, listen to a musical excerpt, and then describe in writing the imaginative scenarios and emotions that arise. The lab has also worked in collaboration with Princeton University Concerts. At a Takács Quartet performance this past spring, the researchers gathered free-response descriptions from hundreds of concertgoers.

With the help of large language models, Margulis and her team analyze the descriptions, looking for patterns they might not have elucidated without the help of AI tools. “What’s so cool about machine learning is it helps us see structure in what seem like singular, subjective experiences,” said Margulis.

What she’s found so far is that people from different cultures frequently have remarkably different emotional and imaginative reactions after listening to the same piece of music. For example, one atonal excerpt by Anton Webern often conjured up a sense of impending doom for English speakers from the American Midwest. However, Dong speakers from the Guizhou province in China tended to imagine joyfully playing outside with friends.

Margulis hopes this work opens new avenues for understanding spontaneous thought in a way that could be applied to clinical settings somewhere down the road. “Think about ADHD or anxiety — both have these components that reside in patterns of spontaneous thought,” said Margulis. “Music gives us a powerful way to study the susceptibility of those thoughts to perceptual influence.”

A.M. Homes, professor of the practice in creative writing and the Lewis Center for the Arts, has been doing a lot of thinking herself lately about AI. “I’m one of the writers whose books have been fed to AI to train on,” Homes said. “I sit on the Writers Guild of America’s Council on AI, and we are very concerned about how AI is being used in the entertainment world.”

Photo by Matthew Raspanti, Office of Communications

A.M. Homes in creative writing is working on a novel that explores themes of grief and what could happen when people turn to artificial intelligence for comfort.

To navigate this complicated moment of murky boundaries surrounding AI use, Homes is doing what she does best — writing about it. AI isn’t a tool she uses in her creative life; instead she’s working on a novel that interweaves themes of grief and explores what could happen when people turn to artificial intelligence for comfort.

Homes is a fiction writer who taps into the ideas percolating through society and culture at large. She sees the author’s role as being an artist who conceptualizes worlds and futures that don’t exist, inviting readers to think critically about the one they live in. “Whenever I’m writing something, what I really want to inspire is discussion and conversation,” she said.

In Arash Adel’s ideal future, humans and AI aren’t at creative odds but work together. While pursuing his Ph.D. at ETH Zurich, Adel focused on computational design and robotic integration into architecture construction. “But I wondered about the role of humans,” he said.

From L to R: Photos by Daniel Ruan and Bob Berg; courtesy of Arash Adel

Arash Adel and his team have recently built Timbrelyn, a robotically fabricated structure on the historic grounds of the 1969 Woodstock Festival in Bethel, N.Y.

Now an assistant professor in the School of Architecture with an affiliation at Princeton Robotics, Adel investigates human-robot collaboration where people supervise and instruct while robots perform some of the physically demanding and potentially dangerous construction tasks. This type of human-robot partnership, he said, is driven forward with AI models.

In 2024, Adel’s research group put this approach into practice with Timbrelyn, a raised wooden platform created from intricate layers of lumber and installed on the grounds of the 1969 Woodstock Festival in Bethel, N.Y. Using AI vision, robots scanned inventories of reclaimed and new lumber to identify wood elements that met design specifications while minimizing waste. After selection, the robots grasped the elements and cut them with a saw before assembling them with a human collaborator.
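To make the idea of matching inventory to a design while limiting waste concrete, here is a minimal sketch. It is not the method Adel's group used: it assumes each design element needs only a minimum length and applies a simple greedy best-fit heuristic. The function name `pick_boards` and all lengths (in meters) are hypothetical.

```python
from typing import List, Optional, Tuple


def pick_boards(required: List[float], inventory: List[float]) -> List[Tuple[float, Optional[float]]]:
    """Greedy best-fit: for each required length (longest first), take the
    shortest remaining board that is long enough, to keep offcuts small."""
    available = sorted(inventory)
    plan = []
    for need in sorted(required, reverse=True):
        # First board in ascending order that is long enough, or None if none fits.
        match = next((board for board in available if board >= need), None)
        if match is not None:
            available.remove(match)
        plan.append((need, match))
    return plan


# Hypothetical design lengths and scanned inventory, in meters.
plan = pick_boards(required=[2.4, 1.2, 3.0], inventory=[3.6, 1.5, 2.5, 3.1])
waste = sum(board - need for need, board in plan if board is not None)
print(plan)
```

A greedy heuristic like this is easy to follow but is not guaranteed to find the lowest-waste assignment in every case.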

Adel and his group are now working on a project that involves AI assisting in the design process as well. The goal is to ultimately develop a pipeline where humans and robots collaborate from inception to final construction.

“Humans are very intuitive, but we struggle to process large amounts of information at once,” said Adel. “The role of the AI is to augment human creativity.”

Connecting engineers and humanists: the Center for Digital Humanities

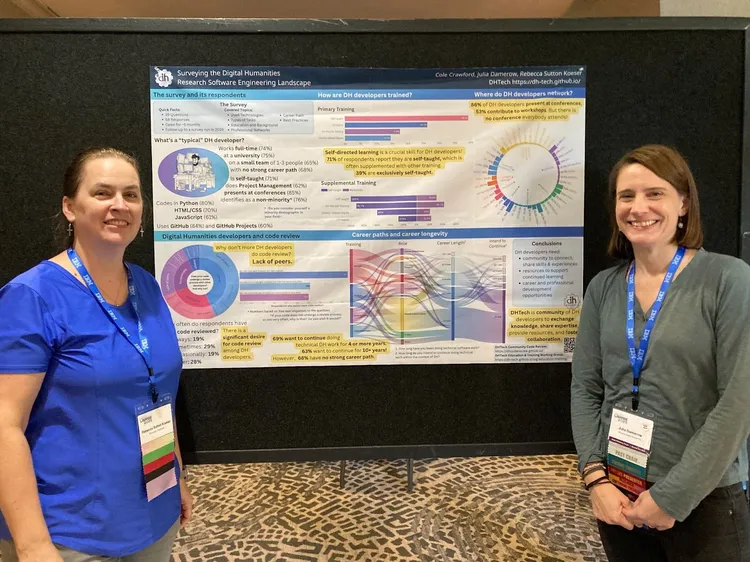

Photo by Kristopher Johnson

The Princeton Prosody Archive (left) is a searchable database of thousands of English-language digitized works published between 1559 and 1928, directed by Meredith Martin (right).

By the time ChatGPT exploded onto the scene in 2022, the Center for Digital Humanities had already been situated at the cutting edge of humanities-technology collaboration for the better part of a decade.

The center equips Princeton humanities faculty to thrive in a tech-dominated landscape, connecting them with software engineers who build the bespoke software that underlies projects (like Rustow’s and Graziosi’s) and teaching humanists and software engineers how to successfully collaborate.

“We at CDH had already built the necessary collaborative infrastructure for projects involving both software engineers and humanists,” Martin said. With the rapid proliferation of generative AI tools, she has noticed a surge of humanities scholars approaching CDH with questions about how the new technology might transform their work.

There are obvious advantages to AI: faster processing of larger datasets, high-performance computing, quicker pattern recognition. But these technological leaps aren’t enough on their own, Martin said. “Humanists have to bring a lot of knowledge to that interaction for it to work out.”

For that reason, the staff at CDH think carefully about how machine learning might fit into a research project and whether a particular approach suits the research question. At the same time, Martin sees an opportunity for humanists themselves to shape the AI tools.

“There’s no reason why humanists can’t feel empowered to build better models, to participate in model architecture, to think about the kinds of data on which various models are trained and why,” she said.

This desire to bring humanists into the AI fold helped inspire a three-part project developed by CDH that spans the 2025-26 academic year and beyond. The project, Modeling Culture: New Humanities Practices in the Age of AI, brings together Princeton faculty and researchers from other universities for a seminar series to think critically about AI.

Martin ran one of the seminars this fall with Matthew Jones from the Department of History and Andrew Janco, a digital scholarship specialist at Firestone Library, on the problems and questions of modeling. “The main feeling has been one of real empowerment and excitement,” said Martin. “In the room, you can feel people leaning forward.”